How do you fix Heteroscedasticity?

- Transform the dependent variable. One way to fix heteroscedasticity is to transform the dependent variable in some way. …

- Redefine the dependent variable. Another way to fix heteroscedasticity is to redefine the dependent variable. …

- Use weighted regression.

How do you handle Heteroscedastic data?

- Give data that produces a large scatter less weight.

- Transform the Y variable to achieve homoscedasticity. For example, use the Box-Cox normality plot to transform the data.
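A minimal sketch of the Box-Cox transform, in Python with NumPy (assumed available). Instead of reading λ off a Box-Cox normality plot, it picks λ by maximizing the normal profile log-likelihood over a grid; this is a swapped-in but standard way of choosing λ. The function names, the grid from -2 to 2, and the simulated log-normal data are illustrative assumptions, and the data must be strictly positive.

```python
import numpy as np

def box_cox(y, lam):
    """Box-Cox transform of strictly positive data."""
    y = np.asarray(y, dtype=float)
    if np.any(y <= 0):
        raise ValueError("Box-Cox requires y > 0")
    if abs(lam) < 1e-12:                 # lambda = 0 is defined as the log transform
        return np.log(y)
    return (y ** lam - 1.0) / lam

def box_cox_loglik(y, lam):
    """Profile log-likelihood of lambda (up to a constant), assuming the
    transformed values are i.i.d. normal; the last term is the log-Jacobian."""
    y = np.asarray(y, dtype=float)
    z = box_cox(y, lam)
    return -0.5 * len(y) * np.log(z.var()) + (lam - 1.0) * np.log(y).sum()

def choose_lambda(y, grid=None):
    """Pick lambda by grid search (hypothetical default grid: -2..2 in steps of 0.05)."""
    if grid is None:
        grid = np.linspace(-2.0, 2.0, 81)
    scores = [box_cox_loglik(y, lam) for lam in grid]
    return float(grid[int(np.argmax(scores))])

# Simulated, illustrative data: log-normal, so log(y) is exactly normal.
rng = np.random.default_rng(0)
y = np.exp(rng.normal(0.0, 1.0, size=2000))
lam = choose_lambda(y)
z = box_cox(y, lam)
print(lam)
```

For log-normal data the selected λ should land near 0, which corresponds to a log transform.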

What is heteroscedasticity in regression?

Heteroskedasticity refers to situations where the variance of the residuals is unequal over a range of measured values. … If there is an unequal scatter of residuals, the population used in the regression has unequal variance, and the analysis results may therefore be invalid.

Which is the best practice to deal with Heteroskedasticity?

The two most common strategies for dealing with possible heteroskedasticity are heteroskedasticity-consistent (robust) standard errors, developed by White, and weighted least squares.

What is heteroscedasticity in econometrics?

As it relates to statistics, heteroskedasticity (also spelled heteroscedasticity) refers to error variance that is not constant but depends on at least one independent variable within a particular sample.

What are the possible causes of Heteroscedasticity?

Heteroscedasticity is often due to the presence of outliers in the data. In this context, an outlier is an observation that is much smaller or much larger than the other observations in the sample. Heteroscedasticity can also be caused by omitting variables from the model.

How do you test for heteroskedasticity?

There are three primary ways to test for heteroskedasticity: you can check a residual plot visually for a cone shape, use the simple Breusch-Pagan test when the errors are normally distributed, or use the White test, a general test that does not assume a specific form of heteroskedasticity.
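A minimal sketch of White's test for a regression with a single predictor, in Python with NumPy (assumed available). The auxiliary regression of the squared OLS residuals on a constant, x and x² gives LM = n·R², compared with a chi-square distribution with 2 degrees of freedom. With several predictors the auxiliary regression would also include all squares and cross products. The simulated data and function names are illustrative assumptions.

```python
import numpy as np

def ols_resid(X, y):
    """Residuals from an OLS fit of y on the columns of X."""
    beta, *_ = np.linalg.lstsq(X, y, rcond=None)
    return y - X @ beta

def white_test(x, y):
    """White's test for the simple regression y = b0 + b1*x + e.
    Auxiliary regression of e^2 on [1, x, x^2]; LM = n * R^2 ~ chi2(2) under H0."""
    n = len(y)
    X = np.column_stack([np.ones(n), x])
    e2 = ols_resid(X, y) ** 2
    Z = np.column_stack([np.ones(n), x, x ** 2])   # one regressor: no cross products
    u = ols_resid(Z, e2)
    r2 = 1.0 - (u @ u) / np.sum((e2 - e2.mean()) ** 2)
    return n * r2, Z.shape[1] - 1

# Simulated, illustrative data.
rng = np.random.default_rng(1)
x = rng.uniform(1, 10, size=500)
y_homo = 2 + 3 * x + rng.normal(0, 1, size=500)        # constant error variance
y_het = 2 + 3 * x + rng.normal(0, 1, size=500) * x     # error sd grows with x
for label, y in [("homoscedastic", y_homo), ("heteroscedastic", y_het)]:
    lm, df = white_test(x, y)
    # 5.991 is the 5% critical value of the chi-square distribution with 2 df
    print(label, round(lm, 1), "reject H0" if lm > 5.991 else "do not reject H0")
```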

What is Homoscedasticity in econometrics?

Homoskedastic (also spelled “homoscedastic”) refers to a condition in which the variance of the residual, or error term, in a regression model is constant. That is, the spread of the error term does not change as the value of the predictor variable changes.

How bad is heteroskedasticity?

Heteroskedasticity has serious consequences for the OLS estimator. Although the OLS estimator remains unbiased, the estimated standard error (SE) is wrong. Because of this, confidence intervals and hypothesis tests cannot be relied on.

How does heteroskedasticity affect standard errors, and how do we fix that?

Heteroscedasticity does not cause ordinary least squares (OLS) coefficient estimates to be biased, although it can cause the OLS estimates of the variance (and, thus, the standard errors) of the coefficients to be biased, possibly above or below the true population variance.
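A small Monte Carlo sketch, in Python with NumPy (assumed available), illustrates both claims. The design (error standard deviation equal to |x|, x uniform on [-3, 3], 2000 replications) is an illustrative assumption. In this design the average OLS slope stays near the true value of 3, while the average classical standard error is smaller than the actual spread of the slope estimates; with a different variance pattern the classical SE could instead be too large.

```python
import numpy as np

rng = np.random.default_rng(2)
n, reps, true_slope = 200, 2000, 3.0
x = rng.uniform(-3, 3, size=n)          # fixed design across replications
X = np.column_stack([np.ones(n), x])
XtX_inv = np.linalg.inv(X.T @ X)

slopes, classical_se = [], []
for _ in range(reps):
    e = rng.normal(0, 1, size=n) * np.abs(x)   # error sd = |x|: heteroscedastic
    y = 1.0 + true_slope * x + e
    beta = XtX_inv @ X.T @ y                   # OLS estimate
    resid = y - X @ beta
    s2 = resid @ resid / (n - 2)               # usual OLS error-variance estimate
    slopes.append(beta[1])
    classical_se.append(np.sqrt(s2 * XtX_inv[1, 1]))

slopes = np.array(slopes)
print("mean slope:", slopes.mean())                    # near 3: OLS is still unbiased
print("actual sd of slope:", slopes.std(ddof=1))
print("average classical SE:", np.mean(classical_se))  # understates the sd in this design
```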

How is heteroscedasticity detected, and what are the remedial measures for it?

If $V(\mu_i) = \sigma_i^2$, that is, the error variance differs across observations, then heteroscedasticity is present. Given the values of $\sigma_i^2$, heteroscedasticity can be corrected by using weighted least squares (WLS), a special case of generalized least squares (GLS). Weighted least squares is the OLS method of estimation applied to the transformed model, in which each observation is divided by $\sigma_i$.
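A minimal sketch of WLS with known error standard deviations, in Python with NumPy (assumed available): divide each row of y and X by σᵢ and run OLS on the transformed data. The function name and the simulated data (σᵢ = 0.5·xᵢ) are illustrative assumptions; in practice the σᵢ are rarely known exactly.

```python
import numpy as np

def wls_known_sigma(X, y, sigma):
    """WLS as OLS on the transformed model y_i/sigma_i = (x_i/sigma_i)'beta + error_i/sigma_i.
    Assumes the per-observation error standard deviations sigma_i are known."""
    w = 1.0 / np.asarray(sigma, dtype=float)
    beta, *_ = np.linalg.lstsq(X * w[:, None], y * w, rcond=None)
    return beta

# Simulated, illustrative data with known, growing error sd.
rng = np.random.default_rng(3)
n = 1000
x = rng.uniform(1, 10, size=n)
sigma = 0.5 * x
y = 2.0 + 3.0 * x + rng.normal(0, 1, size=n) * sigma
X = np.column_stack([np.ones(n), x])
beta_wls = wls_known_sigma(X, y, sigma)
print(beta_wls)   # estimates of the intercept 2 and slope 3
```

The transformed-model route gives the same answer as the weighted normal equations $\hat\beta = (X^\top W X)^{-1} X^\top W y$ with $W = \operatorname{diag}(1/\sigma_i^2)$; dividing the rows avoids forming the n × n weight matrix.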

Why is the heteroscedasticity test done?

The Breusch-Pagan test is used to test for heteroskedasticity in a linear regression model and assumes that the error terms are normally distributed. It tests whether the variance of the errors from a regression depends on the values of the independent variables.
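A minimal sketch of the original Breusch-Pagan LM statistic, in Python with NumPy (assumed available). The squared OLS residuals are scaled by their mean, regressed on the model's regressors, and half of the explained sum of squares is compared with a chi-square distribution. Using the model's own regressors as the variance drivers, the function name, and the simulated data are assumptions for illustration.

```python
import numpy as np

def breusch_pagan(X, y):
    """Original Breusch-Pagan LM test. The model's own regressors X (first column
    a constant) are used as the variables that may drive the error variance.
    Returns (LM, df); under H0 and normal errors, LM is approximately chi2(df)."""
    n = len(y)
    beta, *_ = np.linalg.lstsq(X, y, rcond=None)
    e2 = (y - X @ beta) ** 2
    g = e2 / (e2.sum() / n)                      # squared residuals scaled by the ML variance
    gamma, *_ = np.linalg.lstsq(X, g, rcond=None)
    ess = np.sum((X @ gamma - g.mean()) ** 2)    # explained sum of squares of the auxiliary fit
    return ess / 2.0, X.shape[1] - 1

# Simulated, illustrative data: error sd proportional to x.
rng = np.random.default_rng(8)
n = 400
x = rng.uniform(1, 10, size=n)
X = np.column_stack([np.ones(n), x])
y = 1 + 2 * x + rng.normal(0, 1, size=n) * x
lm, df = breusch_pagan(X, y)
# 3.841 is the 5% critical value of the chi-square distribution with 1 df
print(lm, df, lm > 3.841)
```

Because this version relies on normal errors, a studentized variant (LM = n·R² from regressing the raw squared residuals) is often preferred when normality is doubtful.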

What are heteroskedasticity robust standard errors?

“Robust” standard errors are a technique for obtaining consistent standard errors of OLS coefficients under heteroscedasticity. Remember, the presence of heteroscedasticity violates the Gauss-Markov assumptions that are necessary to make OLS the best linear unbiased estimator (BLUE).
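A minimal sketch of White's HC0 "sandwich" standard errors next to the classical ones, in Python with NumPy (assumed available). The function name and the simulated data (error sd equal to |x|) are illustrative assumptions; statistical packages also offer small-sample variants such as HC1 to HC3, which are not shown here.

```python
import numpy as np

def ols_with_se(X, y):
    """OLS coefficients with classical and White (HC0) heteroskedasticity-robust SEs."""
    n, k = X.shape
    XtX_inv = np.linalg.inv(X.T @ X)
    beta = XtX_inv @ X.T @ y
    e = y - X @ beta
    classical = np.sqrt(np.diag((e @ e) / (n - k) * XtX_inv))
    meat = X.T @ (X * (e ** 2)[:, None])         # sum over i of e_i^2 * x_i x_i'
    robust = np.sqrt(np.diag(XtX_inv @ meat @ XtX_inv))
    return beta, classical, robust

# Simulated, illustrative heteroscedastic data.
rng = np.random.default_rng(9)
n = 2000
x = rng.uniform(-3, 3, size=n)
X = np.column_stack([np.ones(n), x])
y = 1 + 3 * x + rng.normal(0, 1, size=n) * np.abs(x)
beta, classical, robust = ols_with_se(X, y)
print(beta, classical, robust)
```

The "meat" is computed by scaling rows rather than building an n × n diagonal matrix, which gives the same result with far less memory.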

Which statement is true about heteroskedasticity?

The presence of non-constant variance in the error terms results in heteroskedasticity. Generally, non-constant variance arises because of the presence of outliers or extreme leverage values.

What is the dummy variable trap?

The dummy variable trap is a scenario in which the attributes are perfectly correlated (multicollinear), so that one variable can be predicted exactly from the others. When we use one-hot encoding for categorical data and keep a dummy for every category alongside an intercept, one dummy variable (attribute) can be predicted from the other dummy variables.
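A minimal sketch of the trap in Python with NumPy (assumed available), using a hypothetical `colors` variable: with an intercept plus one dummy per category, the dummies sum to the intercept column, so the design matrix loses full column rank. Dropping one reference category restores it.

```python
import numpy as np

colors = np.array(["red", "green", "blue", "green", "red", "blue"])  # hypothetical data
levels = ["red", "green", "blue"]
full = np.column_stack([(colors == lv).astype(float) for lv in levels])  # one-hot, all levels

X_trap = np.column_stack([np.ones(len(colors)), full])        # intercept + every dummy
X_ok = np.column_stack([np.ones(len(colors)), full[:, 1:]])   # drop "red" as the reference

print(full.sum(axis=1))                                  # every row sums to 1, like the intercept
print(np.linalg.matrix_rank(X_trap), X_trap.shape[1])    # 3 4: rank-deficient
print(np.linalg.matrix_rank(X_ok), X_ok.shape[1])        # 3 3: full column rank
```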

How can Heteroscedasticity be prevented?

Another approach for dealing with heteroscedasticity is to transform the dependent variable using one of the variance stabilizing transformations. A logarithmic transformation can be applied to highly skewed variables, while count variables can be transformed using a square root transformation.
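A minimal sketch of why the square root suits counts, in Python with NumPy (assumed available): for Poisson counts the variance equals the mean, whereas after a square-root transform the variance is roughly 1/4 regardless of the mean. The two simulated groups (means 4 and 100) are illustrative assumptions.

```python
import numpy as np

rng = np.random.default_rng(4)
low = rng.poisson(4, size=20000)     # simulated counts with mean 4
high = rng.poisson(100, size=20000)  # simulated counts with mean 100

# Raw counts: variance tracks the mean, so it differs about 25-fold between groups.
print(low.var(), high.var())
# Square-root scale: both variances are close to 1/4 (the low-mean group a bit above).
print(np.sqrt(low).var(), np.sqrt(high).var())
```

The approximation improves as the mean grows; for very small counts, variants such as sqrt(y + 3/8) are sometimes used instead.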

What are dummies in statistics?

In statistics and econometrics, particularly in regression analysis, a dummy variable is one that takes only the value 0 or 1 to indicate the absence or presence of some categorical effect that may be expected to shift the outcome.
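A minimal sketch of 0/1 dummy coding in plain Python, creating one column per category except a chosen reference category (whose effect is absorbed by the intercept). The function name and the region values are hypothetical.

```python
def dummy_encode(values, reference):
    """Return (column names, rows of 0/1 dummies), one column per non-reference level."""
    levels = sorted(set(values) - {reference})
    rows = [[1 if v == lv else 0 for lv in levels] for v in values]
    return levels, rows

names, rows = dummy_encode(["north", "south", "east", "south"], reference="east")
print(names)  # ['north', 'south']
print(rows)   # [[1, 0], [0, 1], [0, 0], [0, 1]]
```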

How do you test for multicollinearity in regression?

Another method for checking multicollinearity is to compute the variance inflation factor (VIF) for each independent variable. It measures multicollinearity in the set of multiple regression variables: the higher a variable's VIF, the more strongly that variable is correlated with the rest.
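A minimal sketch of the VIF computation, in Python with NumPy (assumed available): regress each predictor on all the others plus an intercept and set VIF = 1 / (1 - R²). The function name and simulated predictors are illustrative assumptions. A commonly quoted rule of thumb treats values above 5 or 10 as a sign of problematic multicollinearity.

```python
import numpy as np

def vif(X):
    """VIF_j = 1 / (1 - R_j^2), where R_j^2 comes from regressing column j
    on all other columns plus an intercept. X holds predictors only (no constant)."""
    n, k = X.shape
    out = []
    for j in range(k):
        target = X[:, j]
        others = np.column_stack([np.ones(n), np.delete(X, j, axis=1)])
        coef, *_ = np.linalg.lstsq(others, target, rcond=None)
        resid = target - others @ coef
        r2 = 1.0 - resid @ resid / np.sum((target - target.mean()) ** 2)
        out.append(1.0 / (1.0 - r2))
    return np.array(out)

# Simulated, illustrative predictors.
rng = np.random.default_rng(5)
x1 = rng.normal(size=500)
x2 = x1 + rng.normal(scale=0.1, size=500)   # nearly a copy of x1
x3 = rng.normal(size=500)                   # unrelated
v = vif(np.column_stack([x1, x2, x3]))
print(v)   # large for x1 and x2, close to 1 for x3
```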

What problem does heteroskedasticity cause for the OLS estimators? Show mathematically.

A nonconstant error variance (heteroscedasticity), that is, $\operatorname{Var}(\varepsilon_j) = \sigma_j^2$ with $\sigma_j^2 \neq \sigma_1^2$ for some $j > 1$, causes the OLS estimates to be inefficient, and the usual OLS covariance matrix, $\Sigma$, is generally invalid.
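One way to make this explicit, using standard matrix notation that is assumed here (X is the n × k regressor matrix, Ω the diagonal covariance matrix of the errors):

```latex
\begin{aligned}
\hat\beta &= (X^\top X)^{-1} X^\top y = \beta + (X^\top X)^{-1} X^\top \varepsilon,
  \qquad E[\hat\beta \mid X] = \beta \\
\operatorname{Var}(\hat\beta \mid X) &= (X^\top X)^{-1} X^\top \Omega X (X^\top X)^{-1},
  \qquad \Omega = \operatorname{diag}(\sigma_1^2, \dots, \sigma_n^2) \\
&= \sigma^2 (X^\top X)^{-1} \quad \text{only when } \Omega = \sigma^2 I
\end{aligned}
```

The first line shows why OLS stays unbiased: it needs only $E[\varepsilon \mid X] = 0$, not constant variance. The second and third lines show why the usual formula, which estimates $\sigma^2 (X^\top X)^{-1}$, misstates the sampling variance once the $\sigma_j^2$ differ. Inefficiency follows from Aitken's theorem: GLS, which weights by $\Omega^{-1}$, has a variance no larger than that of OLS.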

How do you test for heteroscedasticity in SPSS?

- Open SPSS, click Variable View, and in the Name column enter X1, X2, and Y.

- Click Data View and enter the values for each variable.

- Next, click Analyze > Regression > Linear …

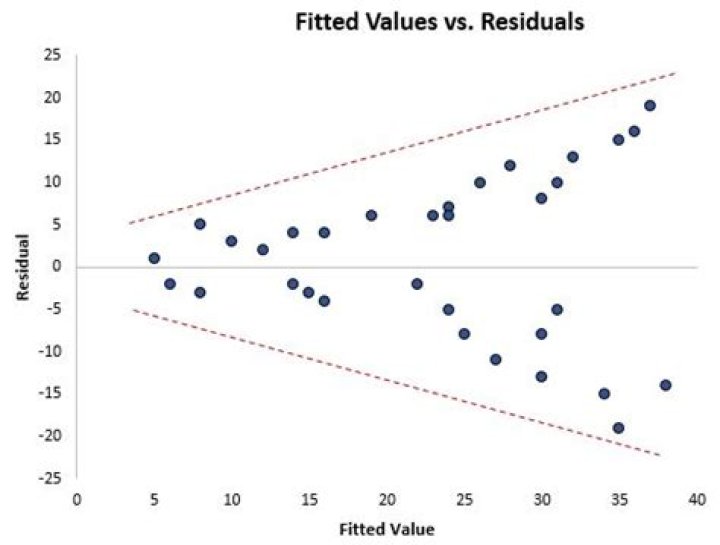

What does Heteroscedasticity look like?

Heteroscedasticity produces a distinctive fan or cone shape in residual plots. … Typically, the telltale pattern for heteroscedasticity is that as the fitted values increase, the variance of the residuals also increases. This cone-shaped pattern is easiest to see in a plot of residuals against fitted values.
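A numerical stand-in for the residuals-versus-fitted plot, in Python with NumPy (assumed available), so that no plotting library is needed: split the observations into quartiles of the fitted values and compare the residual standard deviation in each. The simulated data (error sd growing with x) are an illustrative assumption.

```python
import numpy as np

rng = np.random.default_rng(6)
n = 1000
x = rng.uniform(1, 10, size=n)
y = 2 + 3 * x + rng.normal(0, 1, size=n) * x       # error sd grows with x
X = np.column_stack([np.ones(n), x])
beta, *_ = np.linalg.lstsq(X, y, rcond=None)
fitted, resid = X @ beta, y - X @ beta

# Group observations by quartile of the fitted values and compare residual spread.
edges = np.quantile(fitted, [0.25, 0.5, 0.75])
groups = np.digitize(fitted, edges)                 # 0..3, lowest to highest fitted values
spread = [resid[groups == g].std() for g in range(4)]
print(np.round(spread, 2))                          # rising spread is the numeric "cone"
```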

Why is Homoskedasticity important?

Homoscedasticity, or homogeneity of variances, is an assumption of equal or similar variances in different groups being compared. This is an important assumption of parametric statistical tests because they are sensitive to any dissimilarities. Uneven variances in samples result in biased and skewed test results.

What does Homoscedasticity look like?

So when is a data set classified as having homoscedasticity? The general rule of thumb is: if the ratio of the largest variance to the smallest variance is 1.5 or below, the data is homoscedastic.
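A minimal sketch of this rule of thumb in Python with NumPy (assumed available): compute each group's sample variance and divide the largest by the smallest. The three groups are made-up numbers chosen for illustration.

```python
import numpy as np

def variance_ratio(*groups):
    """Largest sample variance divided by the smallest (ddof=1)."""
    variances = [np.var(g, ddof=1) for g in groups]
    return max(variances) / min(variances)

a = [4.1, 5.0, 5.9, 4.6, 5.4]   # hypothetical groups
b = [4.0, 5.1, 6.0, 4.5, 5.4]
c = [1.0, 9.0, 5.0, 2.5, 7.5]
r1 = variance_ratio(a, b)       # about 1.25: within the 1.5 rule of thumb
r2 = variance_ratio(a, b, c)    # about 23: clearly heteroscedastic
print(r1, r2)
```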

What are the formal and informal methods of detecting Heteroscedasticity?

There are two general ways to detect heteroskedasticity. The first is informal: it is done through graphs, so we call it the graphical method. The second uses formal tests for heteroskedasticity, such as the Breusch-Pagan LM test.

How can Multicollinearity be corrected?

- Remove some of the highly correlated independent variables.

- Linearly combine the independent variables, such as adding them together.

- Perform an analysis designed for highly correlated variables, such as principal components analysis or partial least squares regression.
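A minimal sketch of principal components regression, in Python with NumPy (assumed available): standardize the predictors, take the leading principal components via an SVD, and regress y on those uncorrelated scores. The function name, the choice of two components, and the simulated data are illustrative assumptions; in practice the number of components is usually chosen by cross-validation.

```python
import numpy as np

def pcr_fit(X, y, n_components):
    """Principal components regression: standardize X, project onto the leading
    principal components, then run OLS of y on those (uncorrelated) scores."""
    Z = (X - X.mean(axis=0)) / X.std(axis=0)
    _, _, Vt = np.linalg.svd(Z, full_matrices=False)
    V = Vt[:n_components].T                 # loadings of the kept components
    scores = Z @ V
    A = np.column_stack([np.ones(len(y)), scores])
    gamma, *_ = np.linalg.lstsq(A, y, rcond=None)
    return scores, gamma

# Simulated, illustrative data with two nearly collinear predictors.
rng = np.random.default_rng(7)
x1 = rng.normal(size=300)
x2 = x1 + rng.normal(scale=0.05, size=300)
x3 = rng.normal(size=300)
X = np.column_stack([x1, x2, x3])
y = 1 + 2 * x1 + 2 * x2 - x3 + rng.normal(size=300)
scores, gamma = pcr_fit(X, y, n_components=2)
print(np.round(np.corrcoef(scores, rowvar=False), 3))  # identity: scores are uncorrelated
```

Dropping the last component discards the nearly degenerate x1 - x2 direction that causes the collinearity.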

What can usually be done to correct for non normal residuals?

You can address heteroscedasticity of the residuals with weighted least squares estimation (WLSE), but it is key to select a suitable weighting function. If your weighting function is not good enough, your model will perform poorly. The sample size of your data sets varies from 70 to 150.
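One common way to build a weighting function when the variances are unknown is two-step feasible WLS. Below is a minimal sketch in Python with NumPy (assumed available). It assumes that the log error variance is linear in the regressors, which is only one possible choice of weighting function. The function name and simulated data are illustrative.

```python
import numpy as np

def feasible_wls(X, y):
    """Two-step feasible WLS under an assumed log-linear variance model:
    1) OLS; 2) regress log(e^2) on X to estimate the variance function;
    3) WLS with weights 1 / estimated variance (rows scaled by 1 / estimated sd)."""
    beta_ols, *_ = np.linalg.lstsq(X, y, rcond=None)
    e = y - X @ beta_ols
    # The intercept of this fit is shifted by the constant E[log chi2_1]; that rescales
    # every weight equally and therefore does not change the WLS estimates.
    delta, *_ = np.linalg.lstsq(X, np.log(np.maximum(e ** 2, 1e-12)), rcond=None)
    var_hat = np.exp(X @ delta)
    w = 1.0 / np.sqrt(var_hat)
    beta_wls, *_ = np.linalg.lstsq(X * w[:, None], y * w, rcond=None)
    return beta_ols, beta_wls, var_hat

# Simulated, illustrative data whose log-variance is linear in x.
rng = np.random.default_rng(10)
n = 1000
x = rng.uniform(0, 10, size=n)
X = np.column_stack([np.ones(n), x])
y = 2 + 3 * x + rng.normal(0, 1, size=n) * np.exp(0.15 * x)
beta_ols, beta_wls, var_hat = feasible_wls(X, y)
print(beta_ols, beta_wls)
```

If the assumed variance model is badly wrong, the weights can do more harm than good, which is why the choice of weighting function matters; pairing WLS with robust standard errors is a common safeguard.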

What is econometrics specification error?

In the context of a statistical model, specification error means that at least one of the key features or assumptions of the model is incorrect. … Some forms of misspecification will result in misleading estimates of the parameters, and other forms will result in misleading confidence intervals and test statistics.

Does IID imply Homoskedasticity?

Viewed in this light, the “i.i.d. assumption” has some implications for the marginal distribution of the errors, as well as for autocorrelation (it implies no-autocorrelation of the errors), but it does not cover conditional homo/heteroskedasticity.

Does heteroskedasticity increase standard error?

Only when heteroskedasticity is present is the conventional (“normal”) standard error inappropriate. The White standard error, by contrast, is appropriate (in large samples) with or without heteroskedasticity, that is, even when your model is homoskedastic.