What is a decision tree, with an example?

A decision tree is a very specific type of probability tree that enables you to make a decision about some kind of process. For example, you might want to choose between manufacturing item A or item B, or investing in choice 1, choice 2, or choice 3.
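As a rough illustration of that kind of choice, the sketch below compares two options by expected value, which is one common way to evaluate the branches of a probability tree. The payoffs, probabilities and function names are invented for this example and do not come from any real process.

```python
# Hypothetical payoffs and probabilities, invented for illustration only.
# Each option maps to a list of (probability, payoff) outcomes.
options = {
    "item A": [(0.6, 100_000), (0.4, -30_000)],
    "item B": [(0.8, 50_000), (0.2, -5_000)],
}

def expected_value(outcomes):
    """Probability-weighted average payoff of one option."""
    return sum(p * payoff for p, payoff in outcomes)

def best_option(options):
    """Return the name of the option with the highest expected value."""
    return max(options, key=lambda name: expected_value(options[name]))

for name, outcomes in options.items():
    print(name, expected_value(outcomes))
print("choose:", best_option(options))
```

Expected value is only one possible decision rule; a risk-averse decision maker might weight the losing branches more heavily.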

What is a decision tree?

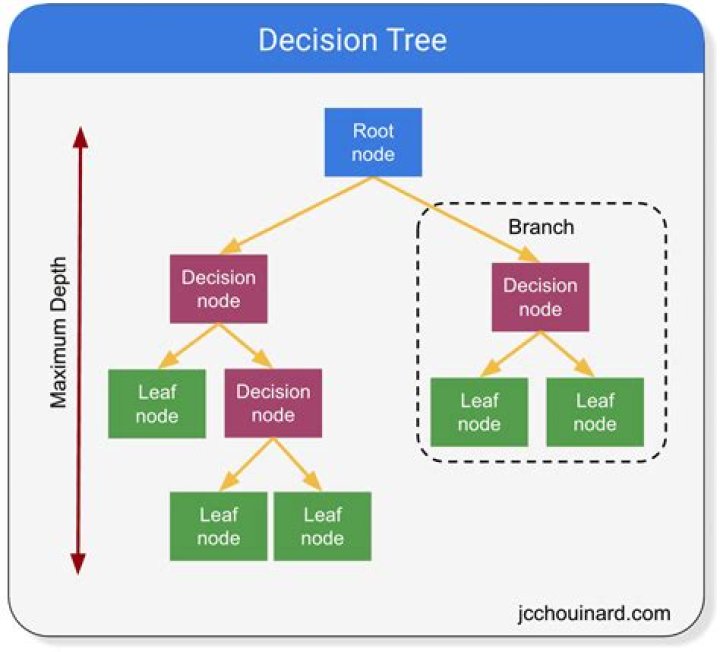

A decision tree is a flowchart-like structure in which each internal node represents a “test” on an attribute (e.g. whether a coin flip comes up heads or tails), each branch represents the outcome of the test, and each leaf node represents a class label (decision taken after computing all attributes).

What are decision trees commonly used for?

A decision tree is a supervised machine learning algorithm that can be used for both regression and classification problems. It divides the complete dataset into smaller and smaller subsets while incrementally building the associated tree.
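The practical difference between the two problem types shows up at the leaves. A minimal sketch, assuming the common convention that a classification leaf predicts the majority class of its subset and a regression leaf predicts the mean target value (the function names here are hypothetical):

```python
from collections import Counter
from statistics import mean

def classification_leaf(labels):
    """Predict the most common class among the training rows in the leaf."""
    return Counter(labels).most_common(1)[0][0]

def regression_leaf(values):
    """Predict the mean target value of the training rows in the leaf."""
    return mean(values)

# Toy subsets, invented for illustration.
print(classification_leaf(["spam", "ham", "spam"]))
print(regression_leaf([10.0, 12.0, 14.0]))
```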

Why are decision trees used?

Decision trees provide an effective method of decision making because they: … allow us to analyze fully the possible consequences of a decision; provide a framework to quantify the values of outcomes and the probabilities of achieving them.

What is a decision tree in AI?

A Decision tree is the denotative representation of a decision-making process. Decision trees in artificial intelligence are used to arrive at conclusions based on the data available from decisions made in the past. … Therefore, decision tree models are support tools for supervised learning.

What is a decision tree and how does it work?

A decision tree is a graphical representation of all possible solutions to a decision based on certain conditions. At each step, or node, of a decision tree used for classification, we try to form a condition on the features that separates the labels or classes in the dataset into groups that are as pure as possible.
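Purity can be measured in several ways. The sketch below uses Gini impurity, one widely used measure, to score how well a candidate condition separates the classes; the helper names and the toy labels are assumptions made for this example.

```python
from collections import Counter

def gini(labels):
    """Gini impurity: 0.0 for a pure group, larger for a more mixed one."""
    n = len(labels)
    if n == 0:
        return 0.0
    return 1.0 - sum((count / n) ** 2 for count in Counter(labels).values())

def split_impurity(left, right):
    """Size-weighted average impurity of the two groups a condition produces."""
    n = len(left) + len(right)
    return (len(left) * gini(left) + len(right) * gini(right)) / n

# Toy labels, invented for illustration.
mixed = split_impurity(["yes", "no"], ["yes", "no"])   # condition separates nothing
pure = split_impurity(["yes", "yes"], ["no", "no"])    # condition separates perfectly
print(mixed, pure)
```

A lower score means purer groups, so the condition with the lowest weighted impurity would be preferred at that node.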

How does a decision tree reach its decision?

A decision tree reaches its decision by performing a sequence of tests.
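That sequence of tests can be sketched as a walk from the root to a leaf. The tree below is hypothetical and hand-written: internal nodes are dictionaries that name the feature to test, and leaves are plain strings holding the decision.

```python
# A hypothetical tree: internal nodes are dicts, leaves are plain strings.
tree = {
    "feature": "outlook",
    "branches": {
        "sunny": {
            "feature": "windy",
            "branches": {True: "stay in", False: "play"},
        },
        "rainy": "stay in",
        "overcast": "play",
    },
}

def decide(node, sample):
    """Follow one branch per test until a leaf (a label) is reached."""
    while isinstance(node, dict):
        value = sample[node["feature"]]   # run the test at this node
        node = node["branches"][value]    # move along the matching branch
    return node

print(decide(tree, {"outlook": "sunny", "windy": False}))
```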

What is true of decision trees?

Decision trees are one of the most respected algorithms in machine learning and data science. They are transparent, easy to understand, robust and widely applicable. You can actually see what the algorithm is doing and what steps it performs to reach a solution.

What are the advantages and disadvantages of decision trees?

A decision tree can be used to solve both classification and regression problems. Its main drawback is that it tends to overfit the training data.

What is a decision tree in AI class 9?

A decision tree is an example of a rule-based approach. Its structure starts at the root node and ends at the leaves, which are connected by branches that carry different conditions.

What is a decision tree in ML?

A decision tree is a flowchart-like structure in which each internal node represents a test on a feature (e.g. whether a coin flip comes up heads or tails), each leaf node represents a class label (decision taken after computing all features) and branches represent conjunctions of features that lead to those class …

Where are decision trees used in AI?

A decision tree is a supervised machine learning algorithm. It can be used for both regression and classification problems, but it is mostly used for classification. A decision tree follows a set of if-else conditions to visualize the data and classify it according to those conditions.

What is the difference between a decision tree and a random forest?

A decision tree combines a series of decisions, whereas a random forest combines several decision trees. Building and using a random forest is therefore a longer, slower process that needs more rigorous training. A single decision tree, by contrast, is fast and operates easily even on large data sets.
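One common way a forest combines its trees for classification is a majority vote. The sketch below shows only that voting step: the three trees are hypothetical and hand-written, whereas a real random forest would learn each tree from a random resample of the training data.

```python
from collections import Counter

# Three hypothetical hand-written trees, invented for illustration.
def tree_1(x):
    return "approve" if x["income"] > 40 else "reject"

def tree_2(x):
    return "approve" if x["debt"] < 10 else "reject"

def tree_3(x):
    if x["income"] > 60:
        return "approve"
    return "approve" if x["debt"] < 5 else "reject"

def forest_predict(trees, x):
    """Classify x by majority vote over the individual tree predictions."""
    votes = Counter(tree(x) for tree in trees)
    return votes.most_common(1)[0][0]

applicant = {"income": 50, "debt": 12}
print(forest_predict([tree_1, tree_2, tree_3], applicant))
```

Every tree has to be evaluated for each prediction, which is one reason a forest costs more than a single tree.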

How do you grow a decision tree?

Growing a decision tree means building it from a data set. Splits are selected, and class labels are assigned to leaves when no further splits are required or possible. Growing starts from a single root node, which takes the table containing the training data set as its input.
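The growing procedure can be sketched as a short recursive function. This is a minimal illustration, not a production algorithm: it assumes numeric features, binary splits of the form `value <= threshold`, Gini impurity as the split criterion, and a majority-class label at each leaf. All names and the toy data are invented for the example.

```python
from collections import Counter

def gini(labels):
    """Gini impurity of a non-empty list of labels."""
    n = len(labels)
    return 1.0 - sum((c / n) ** 2 for c in Counter(labels).values())

def best_split(rows, labels):
    """Return (feature_index, threshold) with the lowest weighted Gini, or None."""
    best, best_score = None, gini(labels)
    for f in range(len(rows[0])):
        for t in sorted({row[f] for row in rows}):
            left = [y for row, y in zip(rows, labels) if row[f] <= t]
            right = [y for row, y in zip(rows, labels) if row[f] > t]
            if not left or not right:
                continue  # a split must send rows to both sides
            score = (len(left) * gini(left) + len(right) * gini(right)) / len(labels)
            if score < best_score:  # keep only splits that improve purity
                best, best_score = (f, t), score
    return best

def grow(rows, labels):
    """Grow a tree from the root; a leaf is just the majority class label."""
    split = best_split(rows, labels)
    if split is None:  # pure node, or no split improves purity
        return Counter(labels).most_common(1)[0][0]
    f, t = split
    left = [(r, y) for r, y in zip(rows, labels) if r[f] <= t]
    right = [(r, y) for r, y in zip(rows, labels) if r[f] > t]
    return {
        "feature": f,
        "threshold": t,
        "left": grow(*map(list, zip(*left))),
        "right": grow(*map(list, zip(*right))),
    }

# Toy training table, invented for illustration.
rows = [(1, 5), (2, 4), (3, 7), (4, 6)]
labels = ["no", "no", "yes", "yes"]
print(grow(rows, labels))
```

Without a depth limit or a minimum leaf size, a procedure like this keeps splitting until it can no longer improve purity, which is how a tree ends up fitting the training data very closely.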

What are the types of decision tree?

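Four commonly cited decision tree methods are ID3, CART (Classification and Regression Trees), Chi-Square and Reduction in Variance. Strictly speaking, the first two are tree-building algorithms, while Chi-Square and Reduction in Variance are criteria for choosing splits.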
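Reduction in variance, the last entry in that list, applies to numeric targets. A minimal sketch, assuming a binary split and population variance (the function name and the sample values are made up for this example):

```python
from statistics import pvariance

def variance_reduction(parent, left, right):
    """Parent variance minus the size-weighted variance of the two children."""
    n = len(parent)
    weighted = (len(left) * pvariance(left) + len(right) * pvariance(right)) / n
    return pvariance(parent) - weighted

# Toy target values, invented for illustration.
prices = [10.0, 12.0, 30.0, 32.0]
print(variance_reduction(prices, prices[:2], prices[2:]))
```

The candidate split with the largest reduction would be the one chosen at that node.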

What is exciting about AI?

Artificial intelligence enhances the speed, precision and effectiveness of human efforts. In financial institutions, AI techniques can be used to identify which transactions are likely to be fraudulent, to perform fast and accurate credit scoring, and to automate manually intensive data management tasks.

How do you create a decision tree in data mining?

Constructing a decision tree is all about finding the attribute that returns the highest information gain (i.e., the most homogeneous branches). Step 1: Calculate the entropy of the target. Step 2: Split the dataset on the different attributes and calculate the entropy of each branch.
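The two steps above can be sketched in a few lines. Information gain is taken here in its usual sense, the entropy of the target minus the size-weighted entropy of the branches; the toy table and the column names are invented for illustration.

```python
from collections import Counter
from math import log2

def entropy(labels):
    """Shannon entropy (in bits) of a list of class labels."""
    n = len(labels)
    return -sum((c / n) * log2(c / n) for c in Counter(labels).values())

def information_gain(rows, target, attribute):
    """Entropy of the target minus the weighted entropy of each branch."""
    total = entropy([row[target] for row in rows])           # step 1
    branches = {}
    for row in rows:                                         # step 2: split
        branches.setdefault(row[attribute], []).append(row[target])
    remainder = sum(len(b) / len(rows) * entropy(b) for b in branches.values())
    return total - remainder

# Toy table, invented for illustration.
rows = [
    {"outlook": "sunny", "windy": "no", "play": "yes"},
    {"outlook": "sunny", "windy": "yes", "play": "yes"},
    {"outlook": "rainy", "windy": "no", "play": "no"},
    {"outlook": "rainy", "windy": "yes", "play": "no"},
]
best = max(["outlook", "windy"], key=lambda a: information_gain(rows, "play", a))
print(best)
```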

What is a limitation of decision trees?

One limitation of decision trees is that they are unstable compared with other predictors. A small change in the data can cause a major change in the structure of the tree, which can then produce a different result from the one users would normally get.

Which is better: logistic regression or a decision tree?

If you’ve studied a bit of statistics or machine learning, there is a good chance you have come across logistic regression (aka binary logit).

Why are decision tree classifiers so popular?

Decision tree construction does not involve any domain knowledge or parameter setting, and is therefore appropriate for exploratory knowledge discovery. Decision trees can handle multidimensional data.