What is lasso regression used for?

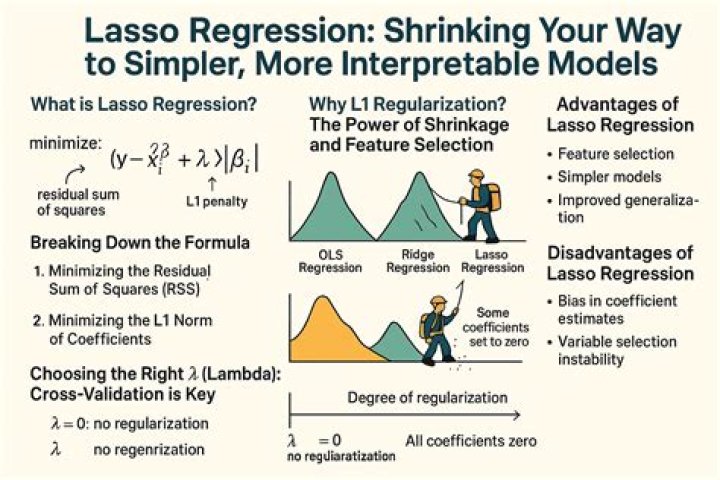

Lasso regression is a regularization technique. It is used in place of ordinary regression methods when more accurate prediction is needed. The model uses shrinkage: estimates are pulled towards a central point, such as the mean, and in lasso the regression coefficients are pulled towards zero.

What does lasso do in Python?

Lasso Regression is an extension of linear regression that adds a regularization penalty to the loss function during training.
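As a rough illustration, the sketch below fits an ordinary linear regression and a lasso on the same data using scikit-learn. The synthetic data, the seed and the penalty strength `alpha=0.1` are assumptions chosen for the example, not values from this article. In scikit-learn, `alpha` plays the role of the penalty weight that is usually written as lambda.

```python
import numpy as np
from sklearn.linear_model import Lasso, LinearRegression

# Synthetic data (an assumption for illustration): 100 samples, 5 features,
# and only the first two features influence the target.
rng = np.random.default_rng(0)
X = rng.normal(size=(100, 5))
y = 3.0 * X[:, 0] - 2.0 * X[:, 1] + rng.normal(scale=0.1, size=100)

ols = LinearRegression().fit(X, y)
lasso = Lasso(alpha=0.1).fit(X, y)  # alpha is scikit-learn's name for lambda

print("OLS coefficients:  ", np.round(ols.coef_, 3))
print("Lasso coefficients:", np.round(lasso.coef_, 3))
```

On data like this, the lasso coefficients for the three irrelevant features should come out as exactly zero, while the two relevant coefficients are pulled slightly towards zero compared with the unpenalized fit.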

Why do we use Ridge and lasso regression?

Ridge and lasso regression allow you to regularize (“shrink”) coefficients. This means that the estimated coefficients are pushed towards 0, to make them work better on new data-sets (“optimized for prediction”). This allows you to use complex models and avoid over-fitting at the same time.

How does a lasso model work?

Lasso regression is a type of linear regression that uses shrinkage. Shrinkage means that estimates are pulled towards a central point, like the mean; in lasso, the coefficients are pulled towards zero. The lasso procedure encourages simple, sparse models (i.e. models with fewer parameters).

Can lasso be used for classification?

You can use lasso or elastic net regularization with generalized linear models, such as logistic regression, which can be used for classification problems. The input is a data matrix with rows as observations and columns as features.
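To make this concrete, here is a minimal sketch of an L1-penalized logistic regression for a two-class problem. The solver is proximal gradient descent, a choice made here for brevity and not something this article prescribes. The function names, the synthetic data and the value `lam=0.1` are all hypothetical.

```python
import numpy as np

def soft_threshold(v, t):
    """Shrink each entry of v towards zero by t, clipping at zero."""
    return np.sign(v) * np.maximum(np.abs(v) - t, 0.0)

def l1_logistic(X, y, lam, n_iter=2000):
    """Minimize mean log-loss + lam * ||beta||_1 by proximal gradient descent.

    Assumes labels in {0, 1} and no intercept term.
    """
    n, p = X.shape
    # Step size 1/L, where L = ||X||_2^2 / (4n) bounds the log-loss curvature.
    step = 4.0 * n / np.linalg.norm(X, 2) ** 2
    beta = np.zeros(p)
    for _ in range(n_iter):
        prob = 1.0 / (1.0 + np.exp(-X @ beta))  # predicted P(y = 1)
        grad = X.T @ (prob - y) / n             # gradient of the mean log-loss
        beta = soft_threshold(beta - step * grad, step * lam)
    return beta

# Rows are observations, columns are features; only features 0 and 1 matter.
rng = np.random.default_rng(0)
X = rng.normal(size=(400, 6))
y = (X[:, 0] - X[:, 1] > 0).astype(float)

beta = l1_logistic(X, y, lam=0.1)
accuracy = np.mean((X @ beta > 0) == (y == 1))
print("coefficients:", np.round(beta, 3))
print("training accuracy:", accuracy)
```

The same penalty that produces sparse linear models is doing the work here: the soft-thresholding step is what sets the coefficients of uninformative features to zero.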

What is Lasso machine learning?

In statistics and machine learning, lasso (least absolute shrinkage and selection operator; also Lasso or LASSO) is a regression analysis method that performs both variable selection and regularization in order to enhance the prediction accuracy and interpretability of the resulting statistical model.

Why can lasso be used for feature selection?

While minimizing the cost function, lasso regression automatically selects the features that are useful and discards the useless or redundant ones. In lasso regression, discarding a feature means setting its coefficient to 0.

Is a lasso a weapon?

Although the use of the lasso itself is difficult to confirm, the lasso was most likely used to assist hunting, domestication of animals, and slavery. As a weapon, the lasso is thrown with the intent of the noose catching around the opponent’s neck.

Why can lasso be applied to solve the overfitting problem?

Lasso regression adds the “absolute value of slope” to the cost function as a penalty term. In addition to resolving the overfitting issue, lasso also helps with feature selection by removing features whose slope is very small or near zero, i.e. features of little importance. (Keep in mind that, unlike ridge, lasso can shrink a slope to exactly zero.)

Is lasso convex?

Both the sum of squares and the lasso penalty are convex, and so is the lasso loss function. … However, the lasso loss function is not strictly convex in general (for example, when there are more predictors than observations). Consequently, there may be multiple β’s that minimize the lasso loss function.

Is lasso better than Ridge?

The lasso method overcomes the disadvantage of ridge regression by not only penalizing high values of the coefficients β but actually setting them to zero if they are not relevant. Therefore, you might end up with fewer features included in the model than you started with, which is a huge advantage.

Should I use lasso or ridge regression?

Lasso tends to do well if there are a small number of significant parameters and the others are close to zero (that is, when only a few predictors actually influence the response). Ridge works well if there are many large parameters of about the same value (that is, when most predictors impact the response).

Why is elastic net better than lasso?

Lasso will eliminate many features, and reduce overfitting in your linear model. … Elastic Net combines feature elimination from Lasso and feature coefficient reduction from the Ridge model to improve your model’s predictions.
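One common way to write the combined penalty is shown below. The mixing weight α and the overall strength λ are notation assumed here for illustration; this article does not define them.

```latex
\lambda \left( \alpha \sum_{j} |\beta_j| + \frac{1 - \alpha}{2} \sum_{j} \beta_j^2 \right),
\qquad 0 \le \alpha \le 1
```

Under this parametrization, α = 1 gives the lasso penalty, α = 0 gives the ridge penalty, and values in between blend the two.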

Is Lasso regression linear or logistic?

Lasso regression is like linear regression, but it uses a technique called “shrinkage”, in which the regression coefficients are shrunk towards zero. Ordinary linear regression gives you the regression coefficients as estimated from the dataset, with no shrinkage.

What is logistic lasso?

LASSO is a penalized regression approach that estimates the regression coefficients by maximizing the log-likelihood function (or minimizing the sum of squared residuals) subject to the constraint that the sum of the absolute values of the regression coefficients, $\sum_{j=1}^{k} |\beta_j|$, is less than or equal to a positive constant $s$.
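Written out for the squared-error case, the constrained problem and its equivalent penalized form look roughly as follows. The symbols $n$, $x_{ij}$, $y_i$, $\beta_0$ and $\lambda$ are notation assumed here; only $k$, $\beta_j$ and $s$ come from the description above.

```latex
\hat{\beta} = \arg\min_{\beta} \sum_{i=1}^{n} \Bigl( y_i - \beta_0 - \sum_{j=1}^{k} x_{ij} \beta_j \Bigr)^2
\quad \text{subject to} \quad \sum_{j=1}^{k} |\beta_j| \le s

\hat{\beta} = \arg\min_{\beta} \Bigl\{ \sum_{i=1}^{n} \Bigl( y_i - \beta_0 - \sum_{j=1}^{k} x_{ij} \beta_j \Bigr)^2
+ \lambda \sum_{j=1}^{k} |\beta_j| \Bigr\}
```

Each bound $s$ corresponds to some penalty weight $\lambda \ge 0$. For the logistic lasso, the sum of squared residuals is replaced by the negative log-likelihood of the logistic model.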

Can lasso be used for binary classification?

Yes, it is valid. Note that an argument such as family=”binomial” (as used in R's glmnet package) is what makes the fit appropriate for a classification problem.

Can LASSO be used for variable selection? Why or why not? What about ridge regression?

The LASSO, on the other hand, handles estimation in the many predictors framework and performs variable selection. … Both ridge regression and the LASSO can outperform OLS regression in some predictive situations – exploiting the tradeoff between variance and bias in the mean square error.

Is PCA used for feature selection?

Principal Component Analysis (PCA) is a popular linear feature extractor that is also used for unsupervised feature selection, based on eigenvector analysis, to identify the original features that matter most for each principal component. … The method generates a new set of variables, called principal components.

What is LASSO L1?

A regression model that uses the L1 regularization technique is called lasso regression, and a model that uses L2 is called ridge regression. The key difference between the two is the penalty term. Ridge regression adds the “squared magnitude” of the coefficients as a penalty term to the loss function, while lasso regression adds their absolute value.
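The two penalty terms are simple enough to compute by hand. The sketch below does so for a made-up coefficient vector; the function names and the values of `coefs` and `lam` are hypothetical.

```python
def l1_penalty(coefs, lam):
    """Lasso penalty: lam times the sum of absolute coefficient values."""
    return lam * sum(abs(b) for b in coefs)

def l2_penalty(coefs, lam):
    """Ridge penalty: lam times the sum of squared coefficient values."""
    return lam * sum(b * b for b in coefs)

coefs = [3.0, -0.5, 0.0]
lam = 2.0
print(l1_penalty(coefs, lam))  # 2 * (3 + 0.5 + 0) = 7.0
print(l2_penalty(coefs, lam))  # 2 * (9 + 0.25 + 0) = 18.5
```

Note how the large coefficient dominates the ridge penalty, while the small coefficient costs relatively more under the lasso penalty.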

How does lasso shrink to zero?

The lasso performs shrinkage so that there are “corners” in the constraint, which in two dimensions corresponds to a diamond. If the sum of squares “hits” one of these corners, then the coefficient corresponding to the axis is shrunk to zero. … Hence, the lasso performs shrinkage and (effectively) subset selection.
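The effect of the corners is easiest to see with a single coefficient. The sketch below assumes a simplified one-dimensional problem in which `z` is the unpenalized estimate; the function names and this simplification are assumptions made for illustration. In that setting the lasso solution is the soft-thresholding rule, while the ridge solution only rescales `z`.

```python
def lasso_shrink(z, lam):
    """Minimizer of 0.5 * (b - z)**2 + lam * abs(b): soft-thresholding."""
    if z > lam:
        return z - lam
    if z < -lam:
        return z + lam
    return 0.0  # any z with abs(z) <= lam is set exactly to zero

def ridge_shrink(z, lam):
    """Minimizer of 0.5 * (b - z)**2 + 0.5 * lam * b**2: proportional shrinkage."""
    return z / (1.0 + lam)

for z in [2.0, 0.8, -0.3]:
    print(z, lasso_shrink(z, 1.0), ridge_shrink(z, 1.0))
```

With `lam = 1.0`, the two smaller estimates become exactly zero under the lasso rule, whereas the ridge rule halves them and never reaches zero.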

Is LASSO robust to outliers?

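The ridge penalty squares each coefficient, so it reacts more strongly to changes in large coefficients than the absolute-value penalty of LASSO does. This sensitivity of the penalty to changes in the coefficients (and thus to outliers) means that ridge is less robust to outliers than LASSO.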

Does LASSO avoid overfitting?

Lasso (L1) regression is a regularization method that reduces overfitting. It is similar to ridge regression, with one very important difference: the penalty function is now lambda*|slope|.

Why do cowboys use lassos?

A lasso is made from stiff rope so that the noose stays open when the lasso is thrown. It also allows the cowboy to easily open up the noose from horseback to release the cattle because the rope is stiff enough to be pushed a little. A high quality lasso is weighted for better handling.

Can you lasso with any rope?

If you’re just practicing, nearly any type of rope will suffice. However, if you intend to actually use your lasso, you’ll want a thin, tough, somewhat stiff rope. Stiffness makes the rope a little harder to tie.

Who invented the lasso?

The lasso was invented by Native Americans, who used it effectively in war against the Spanish invaders. In the western United States and in parts of Latin America the lasso is a part of the equipment of a cattle herder.

Is lasso solution unique?

The lasso solution is unique when rank(X) = p, because the criterion is strictly convex. … Depending on the value of the tuning parameter λ, solutions of the lasso problem will have many coefficients set exactly to zero, due to the nature of the l1 penalty.

What is lambda in lasso?

In lasso, the penalty is the sum of the absolute values of the coefficients. … Hence, much like the best subset selection method, lasso performs variable selection. The tuning parameter lambda is chosen by cross validation. When lambda is small, the result is essentially the least squares estimates.
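The opposite extreme can also be checked numerically: once lambda is large enough, every coefficient is zero. The sketch below assumes the objective (1 / 2n) * ||y - X b||^2 + lambda * ||b||_1 with no intercept, for which the smallest such lambda is max |X^T y| / n. The function names and synthetic data are hypothetical.

```python
import numpy as np

def lasso_objective(X, y, beta, lam):
    """(1 / 2n) * squared error plus lam times the L1 norm of beta."""
    n = len(y)
    resid = y - X @ beta
    return resid @ resid / (2 * n) + lam * np.abs(beta).sum()

def lambda_max(X, y):
    """Smallest lam for which beta = 0 minimizes lasso_objective."""
    return np.max(np.abs(X.T @ y)) / len(y)

rng = np.random.default_rng(0)
X = rng.normal(size=(50, 4))
y = X @ np.array([2.0, 0.0, -1.0, 0.0]) + rng.normal(scale=0.1, size=50)

lam = lambda_max(X, y)
zero = np.zeros(4)

# At lam = lambda_max, no nearby candidate should beat the all-zero vector.
candidates = rng.normal(scale=0.05, size=(1000, 4))
best_other = min(lasso_objective(X, y, b, lam) for b in candidates)
print(lasso_objective(X, y, zero, lam) <= best_other)
```

In practice, a cross-validation search over lambda only needs to cover the range between a value near zero and this upper bound.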

How is lasso solved?

The LASSO (least absolute shrinkage and selection operator) avoids the limitations of traditional methods, which generally employ stepwise regression with information criteria to choose the optimal model. The improved LARS (Least Angle Regression) algorithm solves the LASSO effectively.
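LARS itself is too long to show here. As a simpler stand-in, the sketch below uses cyclic coordinate descent, a different but widely used way to solve the same problem. It assumes centered data, no intercept, and the objective (1 / 2n) * ||y - X b||^2 + lam * ||b||_1. The function names, the data and the fixed number of sweeps are all assumptions.

```python
import numpy as np

def soft_threshold(v, t):
    """Shrink the scalar v towards zero by t, clipping at zero."""
    return np.sign(v) * max(abs(v) - t, 0.0)

def lasso_coordinate_descent(X, y, lam, n_sweeps=200):
    """Minimize (1 / 2n) * ||y - X b||^2 + lam * ||b||_1 one coordinate at a time."""
    n, p = X.shape
    beta = np.zeros(p)
    col_sq = (X ** 2).sum(axis=0) / n  # per-feature scale x_j^T x_j / n
    resid = y - X @ beta               # kept in sync with beta
    for _ in range(n_sweeps):
        for j in range(p):
            # Correlation of feature j with the residual that excludes feature j.
            rho = X[:, j] @ resid / n + col_sq[j] * beta[j]
            new_bj = soft_threshold(rho, lam) / col_sq[j]
            resid += X[:, j] * (beta[j] - new_bj)
            beta[j] = new_bj
    return beta

rng = np.random.default_rng(0)
X = rng.normal(size=(100, 5))
y = 3.0 * X[:, 0] - 2.0 * X[:, 1] + rng.normal(scale=0.1, size=100)

beta = lasso_coordinate_descent(X, y, lam=0.1)
print(np.round(beta, 3))
```

Each inner step solves a one-dimensional lasso problem exactly, which is why coefficients of irrelevant features land on exactly zero and do not merely become small.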

What is the advantage of LASSO over Ridge?

One obvious advantage of lasso regression over ridge regression is that it produces simpler and more interpretable models that incorporate only a reduced set of the predictors.

Is Lasso regression unsupervised?

This result is obtained by means of a two-step approach: first, a supervised regularization method for regression, namely, LASSO is applied, where a sparsity-enhancing penalty term allows the identification of the significance with which each data feature contributes to the prediction; then, an unsupervised fuzzy …

Is Ridge or LASSO faster?

It all depends on the computing power and data available to run these techniques in statistical software. Ridge regression is faster than lasso, but lasso has the advantage of removing unnecessary parameters from the model entirely.